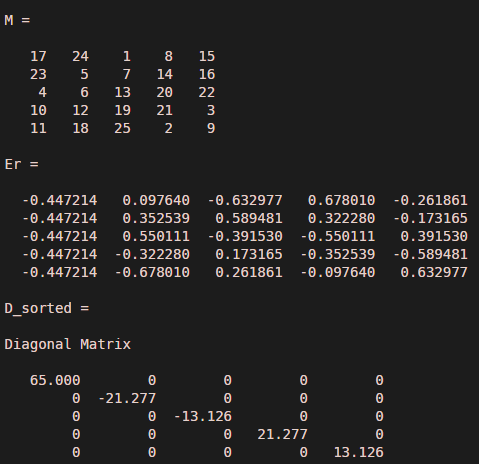

The eigenvectors V of the covariance matrix cov(X) are the principal components (the same as PC returned by PRINCOMP, although the sign can be inverted), and the corresponding eigenvalues E represent the amount of variance explained (the same as latent). Note that it is customary to sort the principal components by their eigenvalues.

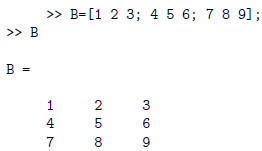

To make things easier to understand, I am first zero-centering the data before calling the PCA function:

    load fisheriris
    X = bsxfun(@minus, meas, mean(meas));   %# so that mean(X) is the zero vector
    [PC, score, latent] = princomp(X);

Let's look at the first returned principal component (1st column of the PC matrix):

    >> PC(:,1)

Therefore, to express the same data in the new coordinate system formed by the principal components, the new first dimension should be a linear combination of the original ones, with the entries of PC(:,1) as the coefficients. We can compute this simply as X*PC, which is exactly what is returned in the second output of PRINCOMP (score). To confirm this, try:

    >> all(all( abs(X*PC - score) < 1e-10 ))

Finally, the importance of each principal component can be determined by how much variance of the data it explains. This is returned by the third output of PRINCOMP (latent).

We can compute the PCA of the data ourselves without using PRINCOMP:

    [V, E] = eig( cov(X) )

Here the command [v,d] = eig(A) returns the eigenvectors and eigenvalues of the matrix A.
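The eig( cov(X) ) route can be sketched end to end as follows. This is a minimal sketch, not a replacement for the library function: it assumes the Statistics Toolbox is available for `load fisheriris`, and the variable names `variances`, `order`, `scores`, and `explained` are introduced here for illustration only.

```matlab
% Load the Fisher Iris measurements (meas is 150-by-4) and zero-center them
load fisheriris
X = bsxfun(@minus, meas, mean(meas));

% Eigenvectors are the columns of V, eigenvalues sit on the diagonal of E
[V, E] = eig(cov(X));

% eig does not promise descending order, so sort explicitly
[variances, order] = sort(diag(E), 'descend');
V = V(:, order);

% Express the data in the coordinate system of the principal components
scores = X * V;

% The variance of each projected column should match its eigenvalue
disp(max(abs(var(scores)' - variances)))

% Fraction of the total variance explained by each component
explained = variances / sum(variances);
disp(explained')
```

Sorting by eigenvalue puts the component that explains the most variance first. Individual columns of V may still differ in sign from what a PCA routine returns, since an eigenvector is only defined up to a nonzero scale factor.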

With PCA, each principal component returned is a linear combination of the original columns/dimensions. Perhaps an example might clear up any misunderstanding you have: let's consider the Fisher Iris dataset, comprising 150 instances and 4 dimensions, and apply PCA to the data.

The equation det(A − λI) = 0 is called the characteristic equation of A, and is an nth-order polynomial in λ with n roots. These roots are called the eigenvalues of A. We will only handle the case of n distinct roots, though they may be repeated. For each eigenvalue, there will be eigenvectors for which the eigenvalue equation is true.

Example: Find the eigenvalues and eigenvectors of a 2x2 matrix

Once the eigenvalues are known, all that's left is to find the two eigenvectors. Let's find the eigenvector v1 associated with the eigenvalue λ1 = −1 first. In this case, we find that the first eigenvector is any 2-component column vector in which the two elements have equal magnitude and opposite sign. We didn't have to use +1 and −1; we could have used any two quantities of equal magnitude and opposite sign. Going through the same process for the second eigenvalue, the choice of +1 and −2 for the eigenvector is again arbitrary; only their ratio matters. This can be checked in MATLAB with eig.
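The eigenvector discussion can be checked numerically. The matrix A below is an assumption: it is a hypothetical 2-by-2 matrix chosen only because it has an eigenvalue of −1 and eigenvector ratios of +1 : −1 and +1 : −2, which is consistent with the text. It is not necessarily the matrix the example was written for.

```matlab
% Hypothetical matrix (an assumption, not taken from the text)
A = [0 1; -2 -3];

% Columns of v are eigenvectors; diag(d) holds the matching eigenvalues
[v, d] = eig(A);

% eig normalizes each eigenvector to unit length. Rescale every column so
% that its first entry is 1, which exposes the ratio between the entries.
v_scaled = bsxfun(@rdivide, v, v(1, :));
disp(diag(d)')
disp(v_scaled)

% Each column satisfies A*v = lambda*v, so this residual is near zero
disp(norm(A*v - v*d))

% Any nonzero multiple of an eigenvector is still an eigenvector:
% w is 5 times [1; -1] and still satisfies A*w = (-1)*w
k = 5;
w = k * [1; -1];
disp(norm(A*w - (-1)*w))
```

Rescaling by the first entry is only one way to fix the arbitrary scale factor; it works here because neither eigenvector of this particular matrix has a zero first entry.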

Eigenvalues and Eigenvectors

An eigenvalue and eigenvector of a square matrix A are a scalar λ and a nonzero vector v that satisfy

$$A v = \lambda v$$

In this equation, A is an n-by-n matrix, v is a nonzero n-by-1 vector, and λ is a scalar (which may be either real or complex). Any value of λ for which this equation has a solution is known as an eigenvalue of the matrix A; it is also called a characteristic value. The vector v that corresponds to this value is called an eigenvector. The eigenvalue problem can be written as

$$(A - \lambda I)\, v = 0$$

If v is nonzero, this equation will only have a solution if

$$\det(A - \lambda I) = 0$$
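For a 2-by-2 matrix, the determinant condition on A − λI expands into a quadratic in λ. The worked expansion below uses placeholder entries a, b, c, d, which are symbols introduced here for illustration and not values from the text.

$$
A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}
\quad\Longrightarrow\quad
\det(A - \lambda I) = (a - \lambda)(d - \lambda) - bc = \lambda^2 - (a + d)\,\lambda + (ad - bc) = 0
$$

The two roots of this quadratic are the eigenvalues: their sum equals the trace a + d and their product equals the determinant ad − bc.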
